Listening is the Black Sheep of Language Learning Skills

Posted by Transparent Language on Jul 30, 2018 in For Learners, Learning + Usage Tips

When learning a language, there’s one skill that’s more neglected than the others. It’s underestimated both in terms of its complexity and its utility. It’s less glamorous than speaking, and more difficult to master than reading. Listening, unfortunately, is the black sheep of language learning.

A recently posted discussion thread on Quora began with the question, “Why is listening so difficult for people studying Spanish?”[1]

Although in this case the question came from a learner of Spanish, the sense that listening is far harder to master than reading is shared by many language learners across the full spectrum of languages, and for good reason.

Angie Torre, an experienced AP Spanish teacher, hosts a blog with tips for teaching AP Spanish Language and Culture, in which she notes that “the listening portion of the test is the most difficult part for non-native and non-heritage speakers.”[2] Her observation is supported by AP score-reporting data showing that Listening sub-scores are regularly lower than Reading sub-scores across a wide range of the languages tested.[3]

Why do language learners struggle with listening more than other skills?

The problem is that, even though listening as a skill is much more complex than reading, most classroom-based listening activities never move beyond highly simplified, inauthentic representations of spoken language that mask most of those complexities.

As John Field notes in his book Listening in the Language Classroom, one of the biggest challenges for learners is the gap between a word’s careful, isolated form and the shape it takes in connected speech.[4]

A survey of the skill level descriptors for the receptive skills of reading and listening across a number of the most reputable proficiency scales, including the CEFR, ACTFL, and the ILR, would seem to suggest that there is very little difference between these two skills. Consider, for example, the following can-do statements from the CEFR’s self-assessment grid for level A2.

Reading A2

I can read very short, simple texts. I can find specific, predictable information in simple everyday material such as advertisements, prospectuses, menus and timetables and I can understand short simple personal letters.

Listening A2

I can understand phrases and the highest frequency vocabulary related to areas of most immediate personal relevance (e.g. very basic personal and family information, shopping, local geography, employment). I can catch the main point in short, clear, simple messages and announcements.

One could almost get the impression that listening is just reading done with the ears rather than the eyes. Nothing could be further from the truth. The problem with these proficiency descriptors, and others like them, is that they focus more on the ways that listening and reading are alike than on the ways that they differ. This tendency to downplay the unique challenges of listening has led some to refer to listening as the red-headed stepchild of language proficiency.

Is listening really all that different from reading?

A student of Spanish who posted in the Quora thread highlighted just two of the many ways in which listening is far more complex, and therefore more difficult to master, than reading: “One problem I had was not being able to tell where one word started and another ended, especially when they talked fast.” This feeling of being unable to keep one’s head above water when thrown into a rapidly moving stream of unbroken speech is common among language learners, especially in the early stages of acquisition.

A good illustration of just how much more complex and demanding listening is than reading can be found in the news ticker that crawls across the bottom of the screen on most cable news broadcasts (sometimes called a chyron).

There’s no way to re-read or pause when listening in real time.

The text moves across the screen at a rate that can challenge even some native speakers, let alone non-native speakers, and then disappears into the ether, never to be seen again. That makes this kind of reading significantly harder than reading the exact same information as static text on a newspaper page. There, it can be read at a comfortable pace, and it remains available for re-reading and review as the reader works through the text, constantly checking their understanding of the author’s communicative intent.

To some extent at least, the experience of reading a scrolling news ticker mimics two of the most basic features that distinguish listening from reading. Unlike the written word, the spoken word is ephemeral: it disappears as quickly as it is spoken, and in most cases the “space” it occupies is immediately filled by the ongoing stream of speech that follows. This stream-of-speech phenomenon places a significant burden on the cognitive processing and short-term memory capacity of the listener. There is no re-reading. There is no review. It’s gone. And if a misunderstanding (or mis-hearing) occurs, the cascading effect can be disastrous, especially when the language learner is asked to process a rate of speech intended for native speakers.[5]

There are no visual clues (spaces, punctuation, etc.) when listening.

Now, let’s try to imagine how the experience might become even more challenging if we were to modify our crawling news ticker to reflect another way in which the spoken word is different from the written word by removing the capitalization, the punctuation, and all of the spaces between the words. Can you read the modified news ticker at the bottom of this screen shot?

How long do you have to stare at it before the word boundaries start to emerge and the entire sentence begins to make sense?[6] Now imagine it scrolling by at a rate of 8 characters per second! As the student who posted on Quora observed, it can be extremely challenging for non-native listeners to figure out where one word ends and the next begins. And when the difficulty is compounded by a rate of speech that has not been slowed down to accommodate the needs of the learner, the task can seem overwhelming.

Listeners are exposed to many different accents, voices, and ways of speaking that aren’t present in the written word.

Let’s add another wrinkle to our scrolling news ticker by changing the block print to messy handwriting to simulate the differences in the regional accents and idiosyncratic vocal qualities of the various speakers we listen to on a daily basis.


As native speakers, we learn to cope with a much wider variety of accent, clarity, rate of speech, and vocal pitch and timbre than we realize. Some people’s voices have more of a nasal quality, while others have a deep pitch and a gravelly quality; some people speak very softly, while others speak quite loudly; some people’s articulation is more slurred, while others’ is clear and precise. All of these differences matter much more to the non-native listener than we might think. In fact, research has shown that even something as simple as the difference in pitch and timbre between the voice of a man and a woman, or a child and an adult, can present significant challenges for a non-native listener encountering a new speaker for the first time. [7]

Printed in a standard font, with appropriate word spacing, punctuation, and capitalization, the above sentence is quite easy to read: “White House: Trump opposes Putin’s request to interview current and former American officials.” I would wager, however, that even if you are a native speaker of English, you had to struggle mightily to make sense of the modified version. Unfortunately for the non-native listener, the normal speech of native speakers, no matter the language, is nowhere near as clear or as standardized as the printed word; it resembles the messy, modified version of this example far more closely than the clean, printed one.

Finally, let’s make one last modification to our scrolling news ticker by eliminating standardized spelling, in order to simulate the different pronunciations that virtually any word can have due not only to variations in the speaker’s accent, but also to variations in its immediate phonetic context.[8]

For example, the /p/ at the end of the word stop has a very different phonetic realization in the one-word exclamation “Stop!” than it does in the sentence “Please stop that.” In the first case, the /p/ is aspirated. Put the palm of your hand directly in front of your lips and say “Stop!” You’ll feel a little puff of air, which is characteristic of aspirated pronunciation. However, when you say “Please stop that,” you’ll feel that puff of air only at the beginning of the word please, not at the end of the word stop.

Most of the phonemes in any language are subject to this kind of phonological variation, resulting in a variety of different pronunciations for the same word. While these variants pose little if any problem for native listeners, who barely notice them, they can be a very real challenge for non-native listeners, who may not even recognize a word they’ve already “learned” when its pronunciation changes from one context to another.

Now imagine the cumulative effect of all these factors on the news ticker crawling along the bottom of your TV screen: messy handwriting, no spaces between the words, no capitalization, no punctuation, and irregular spelling, all scrolling quickly across the screen and disappearing before you can make sense of it. That gives you a sense of how much more complex listening is than reading. Suddenly, even a simple headline like “Huge fire creeps closer to Yosemite” becomes quite a challenge, especially for the beginning language learner.

And as if all of this weren’t enough, there are a number of additional complicating factors that I haven’t even mentioned yet: the kind of background noise you might encounter at a cocktail party or on a busy street, which creates gaps in the speech stream that the listener has to fill in; the tendency of interlocutors to interrupt or talk over one another; the poor signal quality of a flight-departure announcement you might hear at the airport; the false starts, self-repairs, fillers (e.g., ummm…), and unfinished utterances that are extremely common in spontaneous speech; and the frequent use of reduced forms such as “imgunna” (I’m going to) or “shooda” (should have). None of these has a counterpart in standard written texts, and yet they essentially define natural, authentic spoken language.

Listening and speaking are inseparable skills.

When most people think about learning another language, speaking seems to be the skill that first comes to mind. We ask people how many languages they speak, not how many languages they read – and certainly not how many languages they can listen in! When we think about traveling to a foreign country, the first thing many of us think about buying is a phrase book that will teach us how to order dinner in a local restaurant, ask for directions, or talk to shop vendors in the market.

The problem is that when you walk out of the hotel and ask someone on the street for directions to the museum, they’re likely to answer you! And if you haven’t developed your listening skills, the conversation is likely to come to a screeching and unsatisfying halt. The reality is that, while reading and writing are by nature solitary activities (and the same can be said for much of the listening that we do), speaking is almost always interactive, which means that it cannot be separated from listening. In the real world, people carry on conversations that are equal parts speaking and listening, and that develop spontaneously. That is why memorized dialogs won’t get you very far, along with the fact that real people don’t speak to each other using the careful citation forms you’re used to hearing in your dialogs. Time spent developing your listening skills is therefore beneficial not only for its own sake, but also as an essential and inseparable part of developing your conversational skills.

How can you improve your listening proficiency?

While there are no silver bullets that will suddenly or magically simplify the task of listening in another language, there are several practical strategies that can help to build this essential skill.

Select materials that offer lots of support.

When you’re searching for authentic materials that you can use in listening practice, you’ll give yourself a huge advantage if you limit your search to video sources on familiar topics and concentrate on highly predictable and straightforward text types such as simple illustrated stories or instructional videos.

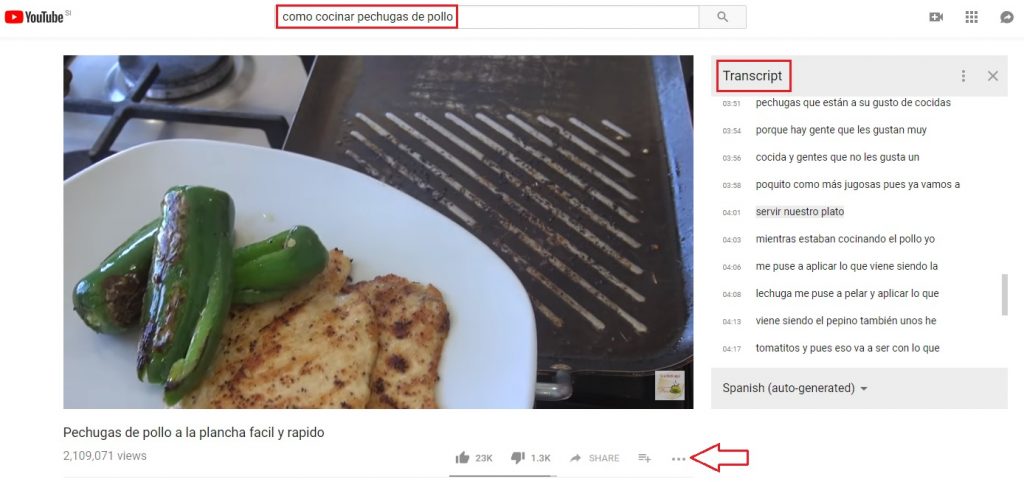

Conducting these kinds of searches on YouTube isn’t difficult. Look up the translations for key terms like instructions, directions, or how to make, and then use these terms to search for instructional videos that teach you how to do something you already know how to do and for which you already know some of the key vocabulary. You’ll find thousands of videos showing you how to do everyday activities like how to change a tire or how to make an omelet. The fact that the topic is already familiar and the video provides real-time visual support as the speaker moves sequentially and predictably through the steps they are teaching will make it much easier for you to understand than if you were simply listening to a news broadcast on the radio, where the topic changes every 10-20 seconds and there are no visual cues to help you follow along.

Interviews are another type of audio/video that offers a different kind of support: redundancy. The interviewer defines the topic and introduces key vocabulary with her question. The cooperative interviewee then reiterates much, if not all, of the question in his response, albeit with varying degrees of modification to the vocabulary, phraseology, and grammar. This kind of redundancy, along with the fact that each of the interviewer’s questions defines and limits the topic of the next response in advance, goes a long way towards simplifying the listening task without sacrificing any of the authentic aspects of natural speech that are usually stripped out of materials created especially for language learners.

Be sure to use only authentic materials, that is, materials created by native speakers for native speakers. That way, you’re more likely to encounter natural rates of speech, reduced forms, fillers, and background noise: all the challenges that will present themselves in real-life listening situations.

Take advantage of technology.

Practicing in real time with another speaker is ideal preparation, but this is not always practical or feasible. Fortunately, technology is readily available online that can not only simulate, but even enhance, the experience. A conversation partner will quickly tire of repeating the same thing over and over again, but YouTube doesn’t care how many times you press that rewind button!

Take advantage of the tools available on these sites. Rewind and replay videos as often as you need. Use subtitles to help fill in the gaps in your listening, to familiarize yourself with variant pronunciations, or to identify and learn reduced forms. YouTube videos with subtitles have a CC icon underneath, and you can filter for captioned videos.

The “more” […] icon often hides an option to read, and even print, the entire time-stamped transcript of a closed-captioned YouTube video, and the settings icon lets you control the playback speed. Setting the playback speed to 0.5 introduces a fair amount of distortion, but it can make word boundaries much easier to identify; setting it to 0.75 eliminates most of the audio distortion while still making it easier to follow along and keep up. A good approach is to take a first pass at full speed, then listen several more times at reduced speeds, gradually working your way back up to full speed, until you can achieve full comprehension at full speed with the closed captions turned off.

Take advantage of fillers and redundancy.

The extensive use of fillers (ummm…, ya know…) and redundancy are two of the most noticeable features that distinguish authentic spontaneous speech from the written word.[9] As a conversation develops, speakers need time to formulate their thoughts, but they don’t want to surrender the floor to another speaker, so they fill the empty space with meaningless fillers like ummm…, ya know…, like…, and so…. Learning to identify these fillers, and to distinguish them from meaningful content, can not only help you avoid unnecessary confusion, but can also give you valuable time to process what has just been said, or to catch up if you’ve fallen slightly behind.

Redundancy – and not just the kind found in a structured interview – is another characteristic feature of spontaneous speech that can provide the listener with valuable processing time. Field notes that “Spoken discourse is often repetitive, with the speaker reiterating, rephrasing, or revisiting information that has already been expressed.”[10] Listeners are constantly making judgments about the information they receive. “They select some, they omit some and they store some in reduced form.”[11] Learning to recognize redundancy and, when appropriate, to ignore it, will reduce your cognitive load as a listener and make the listening task that much more manageable.

Increase the quantity—and quality—of listening you do.

For more listening practice, swap text materials for audio materials. It’s important to recognize, however, that putting on a tape during your commute or playing a movie in the background and hoping you absorb it is not enough. Listen more, but also listen actively.

Do not choose sources that are much too difficult for you. In the gym, you shouldn’t lift weights so heavy that you can’t maintain proper form. The same concept applies to listening proficiency: there’s nothing embarrassing or wrong about watching children’s shows or listening along to a young adult audiobook if that’s an appropriate match for your vocabulary and grammar knowledge. Listening to a formal news broadcast in the target language won’t make you a better listener if you’re still struggling with informal daily conversations at the grocery store. Search for materials that match your level and deal with familiar topics.

As you progress, expand the range of sources you listen to. Consuming many sources, from children’s shows to news channels, radio stations, podcasts, videos, films, and so on, will expose you to natural speech in all its forms: formal reporting, informal conversations, slang, interruptions, etc. Variety matters because it’s easy to become comfortable with one particular voice, but that comfort won’t help you when you speak with someone who is far more soft-spoken or has a slightly different accent.

Finally, build other skills and knowledge in tandem with listening. The components of a language (vocabulary size, grammar knowledge, listening and speaking skills, as well as reading and writing skills) are much like the different arithmetic operations: it’s impossible to do the hard stuff like long division without knowing the simple stuff like addition and subtraction. Build vocabulary with apps that provide audio, read along in a physical copy while listening to an audiobook, and so on.

[1] https://www.quora.com/Why-is-listening-so-difficult-for-people-studying-Spanish

[2] https://bestpowerpointsforspanishclass.com/ready-ap-spanish-language-culture-test/

[3] https://twitter.com/AP_Trevor; https://www.totalregistration.net/AP-Exam-Registration-Service/AP-Exam-Score-Distributions.php?year=2014

[4] Field, John. 2008. Listening in the Language Classroom. Cambridge University Press, p. 141.

[5] Buck, Gary. 2001. Assessing Listening. Cambridge University Press, p. 6.

[6] The original text for the modified news ticker in the screenshot reads, “GOP push to extend tax cuts meets resistance in Senate.”

[7] Field, John. 2008. Listening in the Language Classroom. Cambridge University Press, p. 158.

[8] Oakeshott-Taylor, John. 1980. Acoustic Variability and its Perception: The effects of context on selected acoustic parameters of English words and their perceptual consequences. Frankfurt am Main: Verlag Peter D. Lang.

[9] Baird, Albert and Knower, Franklin. 1963. General Speech: An Introduction. New York: McGraw-Hill, pp. 136-137.

[10] Field, John. 2008. Listening in the Language Classroom. Cambridge University Press, p. 243.

[11] Ibid.


Comments:

Helen Berg:

Immersion is the best way to learn a language. That said, the classroom or online study is the best way to learn grammar. However, Transparent Language is the best language learning software because listening to the pronunciation of a word is readily accessible with Transparent Language. I love the variety of presentations and applications associated with Transparent Grammar! Transparent Grammar makes language learning fun and interesting.

Thomas Kopf:

Listening is a really difficult skill. Reading the article, I suddenly remembered how as a child I tried to understand the words of a popular song and sing it. When I found the lyrics of that song on the Internet, it turned out that it had completely different words and a different meaning.

I think it is right that when you learn a language you pay so much attention to listening, both in quantity and quality.